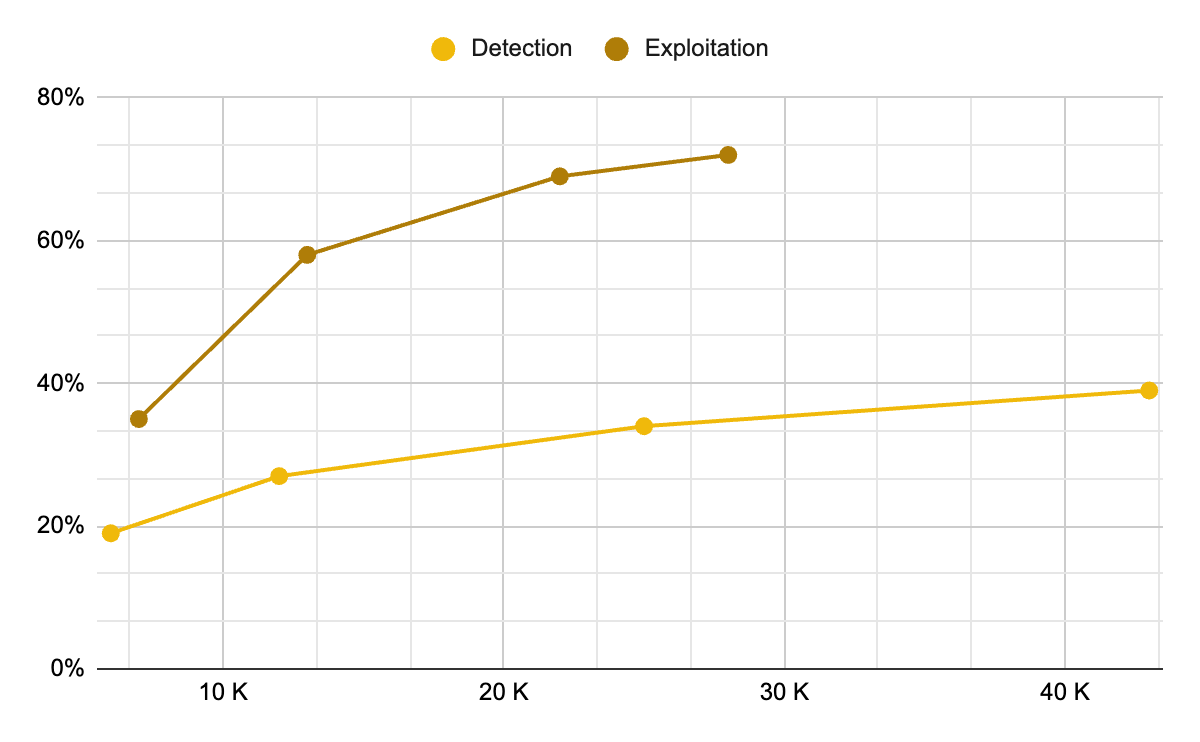

AI Is Twice as Good at Exploiting Smart Contracts as It Is at Detecting Vulnerabilities, and the Gap Is Growing


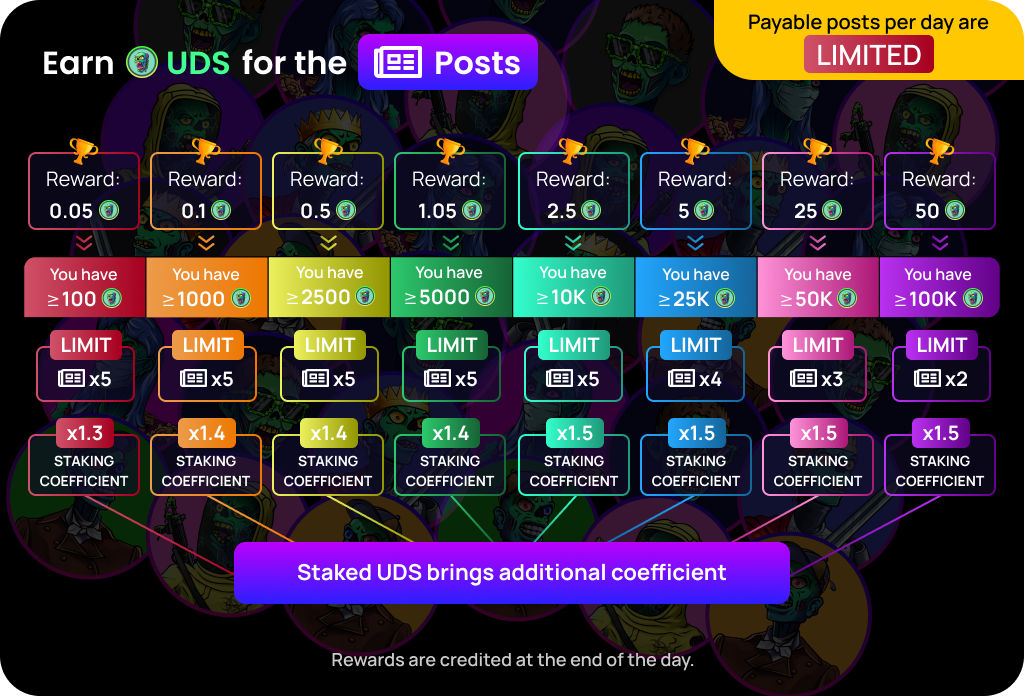

Binance Research has published data confirming what many DeFi security analysts have been warning about: AI tools are significantly more capable at exploiting smart contract vulnerabilities than at detecting them, and the gap is widening. GPT-5.3-Codex achieves a 72.2% success rate in exploit mode on the EVMbench benchmark, which tests AI agents against 117 curated high-severity vulnerabilities drawn from 40 audits. Its success rate in detection mode is roughly half that figure. The report's conclusion is direct: AI is currently twice as effective at exploitation as at detection, and the economics now favor attackers.

The cost dynamics amplifying this problem are stark. AI-powered smart contract exploits currently average approximately $1.22 per contract, and Binance Research projects that cost will fall another 22% every two months. An attacker can scan thousands of open-source smart contracts in minutes at marginal cost, identify exploitable vulnerabilities automatically, and execute attacks at a scale and speed that human security teams cannot match reactively.

Meanwhile, over 80% of developers now use AI in development according to Hacken's survey data, but fewer than 40% use AI for advanced security testing, creating a structural imbalance where the tools available to attackers are being applied more aggressively and more effectively than those available to defenders. Unless the adoption of AI in security testing catches up with its adoption in development, this offense-defense gap will continue to widen with every improvement in underlying model capability.
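To make the cost projection concrete, the short sketch below extrapolates the reported $1.22 average under the assumption that the 22% decline compounds every two months. The report as summarized here gives only the current figure and the rate, so the geometric-decay model, the function name `projected_cost`, and the chosen time horizons are illustrative assumptions rather than Binance Research's own method.

```python
# Illustrative sketch only. Assumption: the 22% decline compounds once per
# two-month period (geometric decay). The source states the current average
# and the rate, not the projection model.

CURRENT_COST_USD = 1.22     # reported average cost per contract
DECLINE_PER_PERIOD = 0.22   # reported 22% drop per period
PERIOD_MONTHS = 2           # reported period length


def projected_cost(months: float) -> float:
    """Return the projected average cost per contract after `months` months."""
    periods = months / PERIOD_MONTHS
    return CURRENT_COST_USD * (1 - DECLINE_PER_PERIOD) ** periods


if __name__ == "__main__":
    # Hypothetical horizons chosen for illustration.
    for months in (0, 2, 6, 12):
        print(f"{months:>2} months: ${projected_cost(months):.2f} per contract")
```

Under that compounding assumption, the average would drop to roughly $0.27 per contract after a year, less than a quarter of today's figure.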