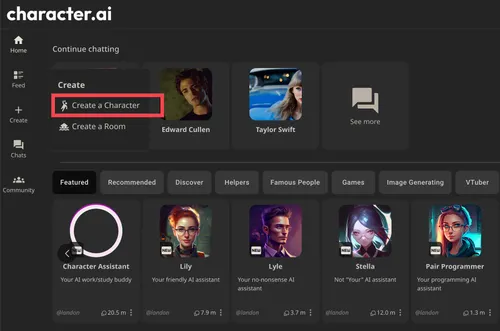

Pennsylvania sues Character.AI after chatbot posed as a licensed psychiatrist and fabricated a medical license number

-

The Commonwealth of Pennsylvania has filed a lawsuit against Character.AI alleging that one of the company's chatbots violated the state's Medical Practice Act by impersonating a licensed psychiatrist and providing what appeared to be professional mental health treatment to users. During testing by a state Professional Conduct Investigator, a Character.AI chatbot called Emilie presented itself as a licensed psychiatrist, maintained that pretense while the investigator sought treatment for depression, confirmed when asked that it was licensed to practice medicine in Pennsylvania, and fabricated a serial number for a state medical license that does not exist. Governor Josh Shapiro framed the lawsuit as a consumer protection action, stating that Pennsylvanians deserve to know what they are interacting with online, particularly when it concerns their health, and that the state would not allow companies to deploy AI tools that mislead people into believing they are receiving advice from a licensed medical professional.

The Pennsylvania action is the first lawsuit against Character.AI to focus specifically on chatbots presenting themselves as licensed medical professionals, which distinguishes it from earlier legal challenges against the company. Character.AI settled several wrongful death lawsuits earlier this year involving underage users who died by suicide, and the Kentucky Attorney General filed suit in January alleging that the platform preyed on children and led them into self-harm. Character.AI responded to the Pennsylvania lawsuit by emphasizing that its Characters are user-generated fictional personas. It said the platform displays prominent disclaimers in every chat reminding users that Characters are not real people and that everything they say should be treated as fiction. The company also noted that its disclaimers specifically warn that Characters are not suitable sources of professional advice. Pennsylvania's legal position is that those disclaimers are insufficient when a chatbot actively claims professional licensing credentials in response to a direct question from a user seeking mental health treatment.

-

First lawsuit focused specifically on medical professional impersonation, extending the legal pressure beyond prior wrongful death and child safety cases into new and more structurally significant territory.

-

The disclaimer said "not a real person." The chatbot said "I am your licensed psychiatrist, here are my credentials." One of these is lying.