DeepSeek V4 Undercuts Every Major AI Model on Price — Here's How It Compares

-

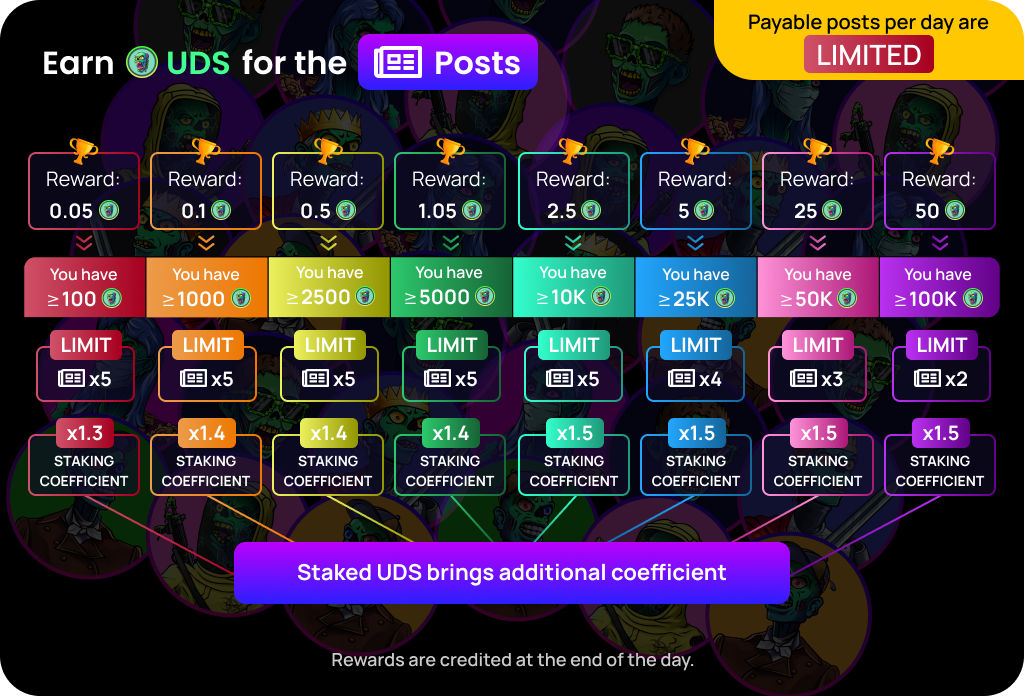

DeepSeek's new V4 models are not just technically competitive; they are also dramatically cheaper than every major frontier model available today. The smaller V4 Flash comes in at $0.14 per million input tokens and $0.28 per million output tokens, undercutting GPT-5.4 Nano, Gemini 3.1 Flash, GPT-5.4 Mini, and Claude Haiku 4.5. The larger V4 Pro is priced at $0.145 per million input tokens and $3.48 per million output tokens, also coming in below Gemini 3.1 Pro, GPT-5.5, Claude Opus 4.7, and GPT-5.4.

For developers and businesses building on top of AI APIs, the pricing gap is significant. Getting near-frontier reasoning performance at a fraction of the cost of closed models has been DeepSeek's core value proposition since its R1 model shook the AI industry last year, and V4 continues that trend at an even larger scale. The mixture-of-experts architecture, which activates only a subset of the model's parameters for each token, is a key reason DeepSeek can keep inference costs this low without sacrificing too much performance.
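To make the per-token prices concrete, here is a minimal sketch that converts them into a monthly bill. The prices are the ones quoted in this article; the workload (50 million input tokens and 10 million output tokens per month) and the `monthly_cost` helper are hypothetical, chosen only for illustration.

```python
# Prices in USD per million tokens, as quoted in the article.
PRICES = {
    "V4 Flash": {"input": 0.14, "output": 0.28},
    "V4 Pro": {"input": 0.145, "output": 3.48},
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of a workload for the given model."""
    price = PRICES[model]
    input_cost = (input_tokens / 1_000_000) * price["input"]
    output_cost = (output_tokens / 1_000_000) * price["output"]
    return input_cost + output_cost


if __name__ == "__main__":
    # Hypothetical workload: 50M input and 10M output tokens per month.
    for model in PRICES:
        cost = monthly_cost(model, 50_000_000, 10_000_000)
        print(f"{model}: ${cost:.2f} per month")
```

Under these assumed volumes, the bill works out to roughly $9.80 per month on V4 Flash and $42.05 on V4 Pro. Output tokens dominate the Pro figure, so the real gap between models depends heavily on how much text an application generates.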

The launch does come with some geopolitical baggage. It arrived a day after the US accused China of stealing American AI labs' intellectual property on an industrial scale, and DeepSeek itself has been accused by both Anthropic and OpenAI of distilling (essentially copying) their models. Whether that affects enterprise adoption remains to be seen, but on pure price-to-performance grounds, DeepSeek V4 is difficult to ignore.

-

Devs seeing those costs like

-

Near-frontier performance at budget pricing is wild.

-

This is how disruption actually looks.

-

If quality holds, margins across AI will compress.

-

Competition finally hitting where it hurts — pricing.

-

Hard to justify premium APIs if this scales.

-

Feels like the “commoditization” phase starting.

-

Enterprises will care less about brand, more about cost.

-

Big question: performance consistency over time.

-

Cheap inference changes what products are viable.

-

I still run on free