Multiverse Computing Pushes Local AI as Alternative to Cloud Dependence

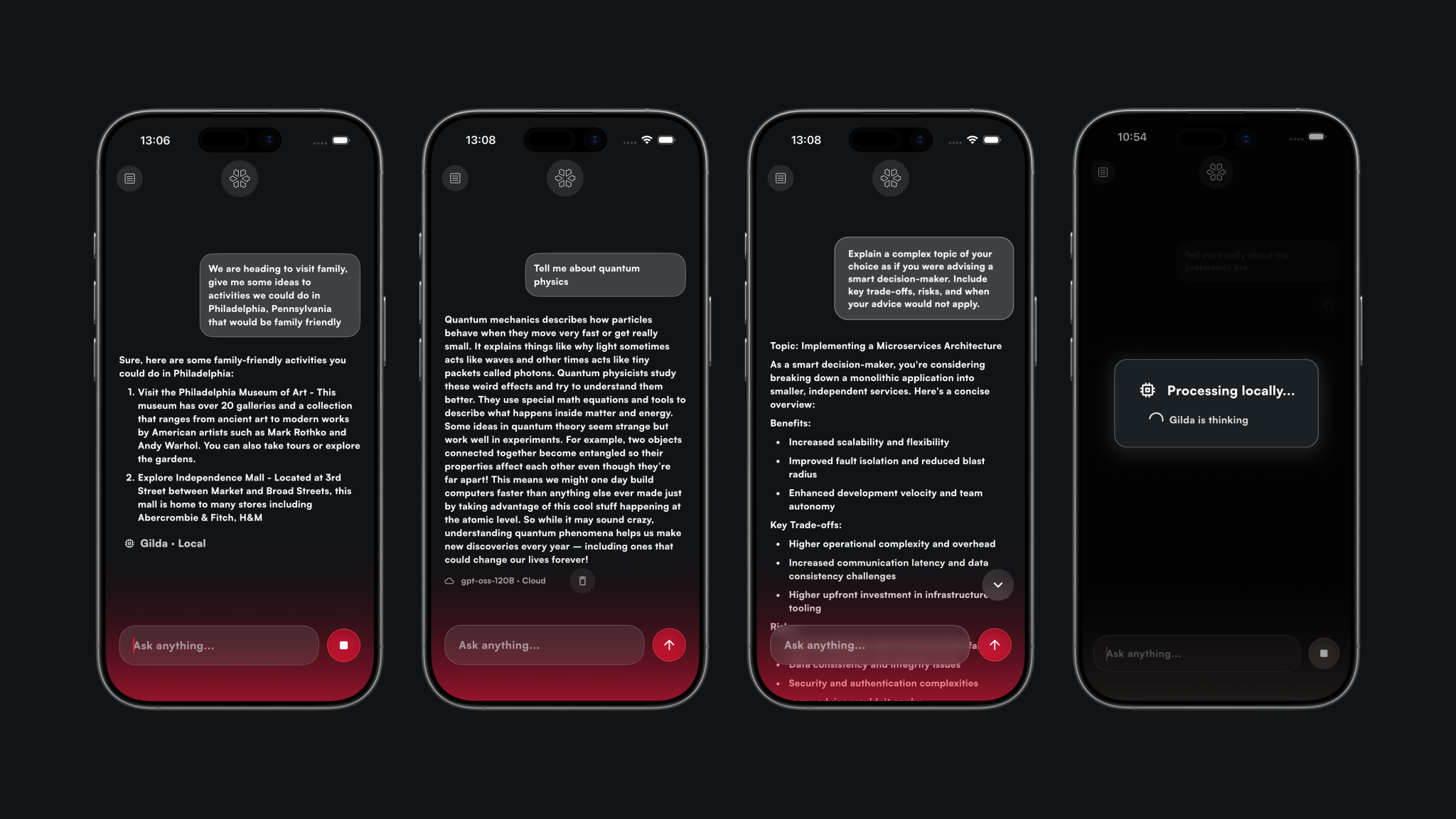

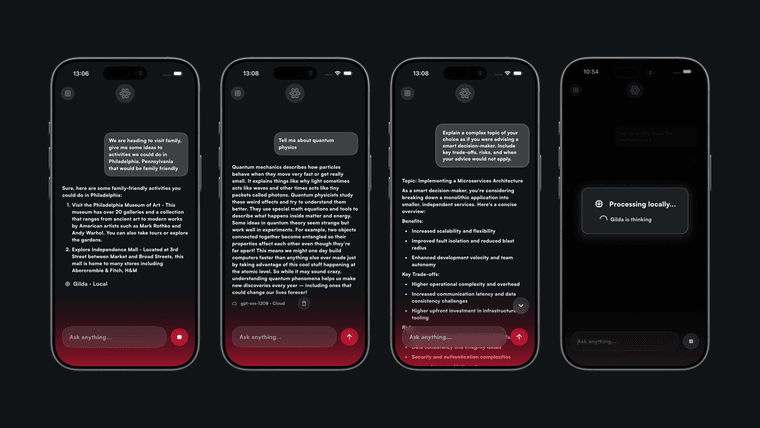

Multiverse Computing is promoting a shift toward smaller AI models that can run directly on devices, reducing reliance on cloud infrastructure amid growing instability in the AI supply chain.

The company recently launched its CompactifAI app and API portal, which showcase compressed models that can run locally and, in some cases, fully offline. Its lightweight model “Gilda” is designed to run on-device, improving privacy and removing the dependence on external compute providers.

The move comes as firms such as Lux Capital warn businesses to secure their compute agreements in writing, citing rising risks across AI infrastructure providers.
